Abstract

Knowledge extraction through machine learning techniques has been successfully applied in a large number of application domains. However, apart from the required technical knowledge and background in the application domain, it usually involves a number of time-consuming and repetitive steps. Automated machine learning (AutoML) emerged in 2014 as an attempt to mitigate these issues, making machine learning methods more practicable to both data scientists and domain experts. AutoML is a broad area encompassing a wide range of approaches aimed at addressing a diversity of tasks over the different phases of the knowledge discovery process being automated with specific techniques. To provide a big picture of the whole area, we have conducted a systematic literature review based on a proposed taxonomy that permits categorising 447 primary studies selected from a search of 31,048 papers. This review performs an extensive and rigorous analysis of the AutoML field, scrutinising how the primary studies have addressed the dimensions of the taxonomy, and identifying any gaps that remain unexplored as well as potential future trends. The analysis of these studies has yielded some intriguing findings. For instance, we have observed a significant growth in the number of publications since 2018. Additionally, it is noteworthy that the algorithm selection problem has gradually been superseded by the challenge of workflow composition, which automates more than one phase of the knowledge discovery process simultaneously. Of all the tasks in AutoML, the growth of neural architecture search is particularly noticeable.

1 Introduction

Despite the huge amount of data available in corporations, a large number of them, especially small- and medium-sized organisations, still do not handle or take advantage of such potential information, even though its analysis could bring valuable insights into their working activity [9]. Extracting useful and novel knowledge for decision-making from raw data is a complex process that involves different phases [12] and requires a strong background in multiple fields, such as pattern mining, databases, statistics, data visualisation or machine learning. In general, data scientists are responsible for identifying relevant data sources, automating the collection processes, preprocessing and analysing the acquired data, and communicating their findings to the stakeholders, so that they can propose solutions and strategies to business challenges [27]. The role of these data professionals is crucial in reaching quality decisions, as it requires their experience, intuition and the application of their know-how.

Along the knowledge discovery process, different phases are accomplished, some of which are human-dependent, like interpreting the mined patterns. In contrast, others are made up of tasks that are repetitive and time-consuming. Moreover, the area is progressing fast, and the algorithms and methods used for cleaning data or learning from them are becoming increasingly sophisticated and numerous [21]. In fact, some methods may be better suited than others to the data characteristics and formulation of a particular organisation [24]. This means that automated methods can make it more practicable for the data professional to explore the alternatives that best fit the problem at hand, as well as alleviating those tasks that are more time-consuming. An illustrative example of this situation is model building, where the large number of available machine learning (ML) algorithms makes it difficult to take the right decisions for the construction of the most appropriate models [1, 128, 344]. Here, apart from selecting the right algorithms, their performance could heavily rely on the selected values for their hyper-parameters [4, 229, 364]. In this context, the term automated machine learning (AutoML) [20] was first coined in 2014 to encompass those approaches that aim precisely at automating these challenging tasks, allowing data scientists to cover a wider spectrum of alternatives, as well as shifting the focus to those phases requiring their know-how and intuition and, ultimately, bringing the knowledge discovery process closer to domain experts [77, 129]. Since then, AutoML has been a rapidly expanding field. Indeed, some AutoML proposals have already shown that they can outperform humans for certain tasks, such as the design of neural network architectures [8, 483].

AutoML approaches can be analysed from multiple perspectives. Different concrete operations or tasks like algorithm selection or hyper-parameter optimisation can be performed to automate the knowledge discovery process. Also, a wide range of techniques can be applied to complete a task. It is common in this field to find studies not explicitly categorised as AutoML, even though they are specifically oriented towards addressing AutoML problems like the design of neural network architectures, the selection of the best preprocessing or ML algorithms [1, 6] and their hyper-parameter values [33, 105], as well as the automatic composition of workflows for knowledge discovery [129, 285, 307]. This might blur the scope, applicability and categorisation of these methods. Because of the growth of AutoML in recent years, other works have explored this field across specific domains of knowledge. Nevertheless, we contend that a comprehensive global review, accompanied by a taxonomy of the field, would provide a holistic overview and situational analysis of the ongoing efforts. This would help identify emerging trends, assess the extent of coverage of different phases of the process, and highlight the dominant techniques being employed in each case. Therefore, this literature review focuses on the breadth of the knowledge area, prior to the requisite deepening of specific categories of tasks, phases or techniques.

More specifically, Luo [26] conducted a review of the various approaches that deal with the selection of the most appropriate ML algorithms and the optimisation of their hyper-parameters. The study mainly focused on the supervised setting and the biomedical environment. With respect to hyper-parameter optimisation, Hutter et al. [19] examined approaches that use Bayesian optimisation and provided a concise overview of traditional techniques. Furthermore, Elsken et al. [10] reviewed the design of neural network architectures. In terms of automatic workflow optimisation, Serban et al. [31] evaluated Intelligent Discovery Assistants, which were described as systems that aid users in analysing their data. This broad definition encompasses a vast number of diverse proposals, some of which are related to AutoML. Specifically, the authors assessed a limited number of approaches using AI planning to generate knowledge discovery workflows automatically. Recently, He et al. [16] published a survey covering the automation of various phases of the knowledge discovery process, with a particular focus on the design of neural network architectures. Also, Hutter et al. [20] presented a volume mainly focused on particular tasks and AutoML systems, such as Auto-sklearn, Auto-Net or Automatic Statistician.

To the best of our knowledge, this paper presents the first systematic literature review that provides a comprehensive overview of the research field of AutoML, without any specific emphasis on particular tasks or phases. Considering that this area embraces a broad range of proposals, we first categorise and then analyse them according to the following perspectives: phases, tasks and techniques. Regarding the phases, we discuss to what extent AutoML has covered the knowledge discovery process, as this could help practitioners to identify the appropriate initiative to use at a given moment. As for the tasks, we provide insights on how phases have been automated. This information will help to understand the level of expertise required by those implementing an AutoML method. We also study those techniques being considered in AutoML and identify possible relationships among them, as well as cross-relations with phases and tasks.

Following a rigorous methodology, we conduct a systematic literature review that starts with an exhaustive search returning 31,048 manuscripts, from which we detected and examined a total of 447 primary studies published between 2014 and 2021. All these studies are analysed and filtered according to a precisely defined protocol based on best practices [22]. As a result, we first categorise the main characteristics of current AutoML developments, including the terms referring to the conducted tasks, the covered phases and the most widely applied techniques. These terms are formulated and interrelated in the form of a high-level taxonomy that provides a frame to understand how current AutoML studies are organised. The analysis of the primary studies also leads to interesting findings. To highlight a few, the qualitative analysis reveals that those phases of the knowledge extraction process considered as inherently human, e.g. the interpretation of results, have barely been considered yet. Also, it has been observed that the increasing complexity of the problems addressed has led to the automation of more than one phase simultaneously. In this vein, algorithm selection is gradually being replaced by the composition of knowledge discovery workflows. Notable relationships of techniques with phases and tasks have also been found. For example, evolutionary computation and reinforcement learning were widely used by early proposals for the design of neural network architectures, while more recent studies are mainly based on gradient-based methods.

The rest of the paper is organised as follows: Section 2 provides some theoretical background on the knowledge discovery process and the most recurrent tasks within AutoML. The research questions and the review methodology are explained in Sect. 3. Then, in Sect. 4 we introduce our AutoML taxonomy, which serves as a guideline for the analysis of the extracted primary studies. Some relevant quantitative findings are reported in Sect. 5, while Sect. 6 discusses other more qualitative findings from the analysis process. Then, Sect. 7 performs a cross-analysis between phases, tasks and techniques. The conducted analysis has served to identify open issues and future lines of research that are discussed in Sect. 8. Finally, we conclude with Sect. 9.

2 Background

The term AutoML first appeared in 2014 to refer to those approaches automating the machine learning process. It cannot be attributed to a single author or reference, but rather to a whole community. More precisely, the first occurrence was in the AutoML workshop, which was held jointly with the International Conference on Machine Learning (ICML) from 2014 to 2021. In 2022, this workshop was transformed into the AutoML conference. To the best of our knowledge, the first papers explicitly referring to AutoML were published in 2015 [14, 129]. Regardless of these dates, notice that some problems addressed by AutoML have been studied for decades, such as the selection of the best ML algorithms and the tuning of their respective hyper-parameter values.

As mentioned above, AutoML aims at automating the different phases of the knowledge discovery process (Sect. 2.1) by performing some particular tasks (Sect. 2.2) in different ways. AutoML is intended to be useful to both domain experts and data scientists. Indeed, certain tasks like hyper-parameter optimisation or neural architecture search (NAS) are intended to improve the performance of the generated models, even though they still require some prior knowledge of the applicable algorithms and their hyper-parameters. In this context, Olson et al. [307] stated that AutoML is not a replacement for data scientists or ML practitioners, but a “data science assistant”. Similarly, Gil et al. [13] proposed the development of interactive AutoML approaches that leverage the experience and background of both domain experts and data scientists, as they can complement and constrain the available data and solutions to their particular needs and requirements.

2.1 Phases of the knowledge discovery process

Different methodologies, each with its own phases, have been proposed to conduct the knowledge discovery process. A representative example is the Knowledge Discovery in Databases (KDD) process that was originally described by Fayyad et al. [12]. It is an iterative and interactive process whose cornerstone is the data mining phase, which aims at extracting patterns from large databases. This process starts by studying the application domain and any prior knowledge relevant to the process. Then, a target dataset is built by focusing on a subset of variables, or data samples, on which the discovery is to be performed. To ensure its quality, a number of data cleaning and transformation methods are applied. Once the dataset has been properly preprocessed, the data mining phase is conducted and the resulting patterns are evaluated. In this context, different data mining methods are identified: classification, regression, clustering, summarisation, dependency modelling or outlier detection, among others. Finally, the derived knowledge is documented, reported and incorporated into another system for further actions like decision-making.

Alternatively, the CRoss-Industry Standard Process for Data Mining (CRISP-DM) was defined by Chapman et al. [7]. This process, which is specifically designed for industry, consists of a cycle encompassing six phases. The first two phases, business understanding and data understanding, are closely related to the domain understanding in KDD. In a third phase, raw data is subject to a set of transformations to improve its quality, thus helping to deploy better models during the fourth phase, which is equivalent to the data mining phase defined by KDD. The resulting models are thoroughly evaluated and the previous steps are revisited if needed, until the business objectives are met. Finally, the acquired knowledge is organised and presented to the customers. Closely related, Sample, Explore, Modify, Model, Assess (SEMMA) [2] is another process consisting of a cycle with five stages, as indicated by the five terms of the process name. In short, these stages can be seen as equivalent to those defined by KDD or CRISP-DM. Notice that all these methodologies are analogous with respect to the phases they involve, the data mining phase being their cornerstone.

2.2 Tasks in AutoML

A preliminary analysis of the manuscripts compiled by the aforementioned surveys and reviews revealed the existence of a set of common operations or tasks conducted in the field of AutoML, namely hyper-parameter optimisation, neural architecture search, algorithm selection and workflow composition. This section briefly introduces these tasks.

2.2.1 Hyper-parameter optimisation and neural architecture search

Most ML algorithms have at least one parameter (a.k.a. hyper-parameter) controlling how they behave. Illustrative examples are the kernel of a support vector machine or the maximum depth of a decision tree. Failing to tune such hyper-parameters properly can greatly hamper the performance of these algorithms. In this context, the hyper-parameter optimisation (HPO) task aims at automatically selecting the hyper-parameter values that maximise the performance of a given algorithm [4]. As this problem has been studied for decades, a large number of techniques have been applied, like grid search, random search, evolutionary algorithms, racing algorithms or Bayesian optimisation, among others. Hutter et al. [19] reviewed the HPO problem focusing on Bayesian optimisation and giving a brief overview of the traditional techniques. They also analysed some approaches focused on reducing the number of hyper-parameters and discussed how to combine them with traditional HPO approaches.
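As a minimal sketch of two of the traditional techniques named above, the following Python snippet contrasts grid search and random search over a small discrete space. The objective `validation_error` is a made-up stand-in for the validation error of a decision tree, and the hyper-parameter names and ranges are assumptions chosen only for illustration; none of this is taken from the reviewed studies.

```python
import itertools
import random


def validation_error(max_depth, min_samples_leaf):
    """Toy objective (hypothetical): pretend the best tree has depth 6 and leaf size 3."""
    return (max_depth - 6) ** 2 + 0.5 * (min_samples_leaf - 3) ** 2


def grid_search(objective, space):
    """Evaluate every combination of values and return the best one."""
    names = list(space)
    best_config, best_score = None, float("inf")
    for values in itertools.product(*(space[name] for name in names)):
        config = dict(zip(names, values))
        score = objective(**config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


def random_search(objective, space, n_trials=50, seed=0):
    """Evaluate n_trials uniformly sampled configurations and return the best one."""
    rng = random.Random(seed)
    best_config, best_score = None, float("inf")
    for _ in range(n_trials):
        config = {name: rng.choice(values) for name, values in space.items()}
        score = objective(**config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


# Assumed search space: 20 x 10 = 200 candidate configurations.
space = {"max_depth": list(range(1, 21)), "min_samples_leaf": list(range(1, 11))}
grid_config, grid_score = grid_search(validation_error, space)
rand_config, rand_score = random_search(validation_error, space)
```

Grid search evaluates all 200 configurations, whereas random search spends a fixed budget of 50 evaluations and may therefore return a slightly worse configuration. In a real setting the objective would train and validate a model, so each evaluation is expensive and the budget matters.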

Recently, the availability of new scalable HPO approaches [323] has supported the development of deep neural networks, which have achieved great success in various application domains like speech [17] and image recognition [15]. This has led to the emergence of the field known as neural architecture search, which is an instance of the HPO problem aiming at simultaneously optimising the architecture and hyper-parameters of artificial neural networks [10]. It is worth noting that these automatically designed neural networks are comparable to, or even outperform, hand-designed architectures [8, 483]. Elsken et al. [10] published a survey that categorises the NAS approaches according to three dimensions: search space, search strategy and performance estimation strategy. More recently, a survey mostly covering NAS, as well as some preprocessing steps of the knowledge discovery process, has appeared in the literature [16]. Regarding the NAS approaches, they are categorised according to their search space, optimisation technique and model evaluation method. As for the preprocessing, it considers both data preparation and feature engineering methods.

2.2.2 Algorithm selection

It is well known that there is no single algorithm that outperforms the others for all the available problems [36]. In the context of ML, algorithms are based on a set of assumptions about the data, i.e. their inductive bias, which makes some of them more suitable for a particular dataset. Rice [29] formalised the algorithm selection (AS) problem with the aim of selecting the best algorithm for each situation and thus limiting the aforementioned shortcoming. It is usually treated as an ML problem where the dataset characteristics, e.g. the number of attributes and classes, are used to predict the expected algorithm performance, which could be measured by its accuracy. The aim is to predict whether an algorithm (or a set of algorithms) is suitable for a specific dataset. Recently, Luo [26] reviewed a number of proposals dealing with AS and/or HPO during the data mining phase. More specifically, that review is focused on supervised learning, i.e. classification and regression. Similarly, Tripathy and Panda [35] reviewed those proposals that exploit meta-learning techniques to carry out the selection of the best data mining algorithm. It is worth noting that the AS problem has also been considered in other application domains like combinatorial optimisation search, which was reviewed by Kotthoff [23].
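The idea of treating algorithm selection as a learning problem over dataset characteristics can be sketched as follows. This is a deliberately simplified illustration: it recommends the algorithm that worked best on the most similar previously seen dataset (a 1-nearest-neighbour rule) rather than predicting performance values, and the meta-dataset, its meta-features and the algorithm labels are all invented for the example.

```python
import math

# Hypothetical meta-dataset: meta-features of previously analysed datasets and
# the algorithm that reached the best accuracy on each (all values are made up).
META_DATASET = [
    ({"n_attributes": 4, "n_classes": 3}, "decision_tree"),
    ({"n_attributes": 60, "n_classes": 2}, "svm"),
    ({"n_attributes": 500, "n_classes": 10}, "random_forest"),
]


def distance(a, b):
    """Euclidean distance on log-scaled meta-features, so large counts do not dominate."""
    return math.sqrt(sum((math.log1p(a[k]) - math.log1p(b[k])) ** 2 for k in a))


def recommend(meta_features, meta_dataset=META_DATASET):
    """Return the best-known algorithm of the most similar previously seen dataset."""
    _, algorithm = min(meta_dataset, key=lambda item: distance(meta_features, item[0]))
    return algorithm


small_problem = recommend({"n_attributes": 5, "n_classes": 3})
large_problem = recommend({"n_attributes": 450, "n_classes": 8})
```

A fuller meta-learning approach would typically use richer meta-features and a trained model that estimates the expected accuracy of each candidate algorithm.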

A related task is the Combined Algorithm Selection and Hyper-parameter optimisation (CASH). It was defined by Thornton et al. [34], who applied a Bayesian optimisation algorithm to jointly address the AS and HPO problems. This was achieved by treating the selection of the ML algorithm as a hyper-parameter itself, thus building a conditional space of hyper-parameters where the selection of a given algorithm triggers the optimisation of certain other hyper-parameters. In addition, assigning specific values to certain hyper-parameters may trigger the optimisation of other hyper-parameters. Prior to its formalisation, this problem had already been addressed by Escalante et al. [11] with particle swarm optimisation.
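The conditional structure described above can be illustrated with a small sketch in which the algorithm is the root hyper-parameter and other hyper-parameters become active only under certain choices. The space below (algorithm names, hyper-parameters and value ranges) is an assumption made for illustration, and the code only samples valid configurations; it does not implement the Bayesian optimisation used by Thornton et al. [34].

```python
import random

# Hypothetical conditional space: choosing an algorithm activates its own hyper-parameters.
SPACE = {
    "svm": {"kernel": ["linear", "rbf"], "C": [0.1, 1.0, 10.0]},
    "decision_tree": {"max_depth": [2, 4, 8, 16]},
}

# Second-level conditions: (algorithm, parent hyper-parameter, value) -> children.
# Here, gamma is only meaningful when the SVM kernel is "rbf".
CONDITIONAL = {("svm", "kernel", "rbf"): {"gamma": [0.01, 0.1, 1.0]}}


def sample_configuration(rng):
    """Draw one valid configuration, respecting the conditional dependencies."""
    algorithm = rng.choice(sorted(SPACE))
    config = {"algorithm": algorithm}
    for name, values in SPACE[algorithm].items():
        config[name] = rng.choice(values)
    # Activate child hyper-parameters whose parent took the triggering value.
    for (algo, parent, value), children in CONDITIONAL.items():
        if algo == algorithm and config.get(parent) == value:
            for name, values in children.items():
                config[name] = rng.choice(values)
    return config


rng = random.Random(0)
samples = [sample_configuration(rng) for _ in range(100)]
```

An optimiser working on such a space only needs to model the hyper-parameters that are active in each configuration, which keeps the joint AS and HPO problem tractable.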

2.2.3 Workflow composition

While AS and HPO provide valuable assistance during the knowledge discovery process, their use is rather limited to the data mining phase [6]. However, selecting and tuning the best algorithm for each phase independently could hamper the performance and even generate invalid sequences of algorithms. There are relationships, synergies and constraints between such algorithms that should be thoroughly examined to reach a pipeline with good performance. Therefore, some authors have begun to propose methods that can automatically compose knowledge discovery workflows involving two or more phases of the process. These proposals rely on different techniques such as particle swarm optimisation [11], Bayesian optimisation [34, 129], evolutionary computation [307] or automated planning and scheduling [285].

As can be inferred from the applied techniques, workflow composition has been mainly addressed as an optimisation problem. This poses a number of new challenges related to the increase of the search space. Apart from optimising the structure of the workflow itself, the best algorithms should be selected for each phase as well. This is even more challenging when the hyper-parameters of these algorithms need to be considered. To address this problem, some proposals fix the workflow structure in advance to generate simpler workflows [30, 34, 129, 285] or give preference to the shorter ones during the optimisation process [307]. Although not specifically oriented towards the workflow composition problem, Serban et al. [31] reviewed a number of intelligent discovery assistants, which are systems that advise users during the data analysis process. Among them, they identified a set of approaches using automated planning and scheduling techniques to automatically compose ML workflows [5, 37]. More recently, Hutter et al. [20] analysed in detail some AutoML tools performing this task.
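To give a feel for how the search space grows, the sketch below enumerates the workflows allowed by a fixed three-phase template, in the spirit of the proposals that fix the workflow structure in advance. The phase names follow the terminology of this review, but the candidate algorithms per phase are assumptions chosen for illustration, and hyper-parameters are ignored.

```python
import itertools

# Hypothetical template: one slot per phase; None means the phase is skipped.
TEMPLATE = {
    "data_cleaning": [None, "mean_imputation", "median_imputation"],
    "data_reduction": [None, "pca", "feature_selection"],
    "classification": ["decision_tree", "svm", "naive_bayes"],
}


def enumerate_workflows(template):
    """Yield every workflow allowed by the template as a list of (phase, algorithm) steps."""
    phases = list(template)
    for choice in itertools.product(*(template[phase] for phase in phases)):
        yield [(phase, algo) for phase, algo in zip(phases, choice) if algo is not None]


workflows = list(enumerate_workflows(TEMPLATE))
# Giving preference to shorter workflows can be as simple as ordering by length.
shortest_first = sorted(workflows, key=len)
```

Even this tiny template yields 3 × 3 × 3 = 27 candidate workflows before any hyper-parameter is considered; every additional phase or algorithm multiplies that number.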

3 Review methodology

For the development of this study, we have followed the guidelines by Kitchenham and Charters [22]. They provide a rigorous and structured approach to identifying and summarising all relevant research on a particular topic, ensuring that the review process is transparent and minimises potential sources of bias. These guidelines assist us in conducting our study in a manner that is replicable by other researchers, which is essential for building a reliable body of knowledge in the field of AutoML. Note that this approach was created according to the experience of domain experts in a variety of disciplines interested in evidence-based practice, and has undergone several revisions since it was first published. In this way, they have gradually refined a process that leads to high-quality systematic literature reviews (SLRs) through a series of steps that researchers should follow. In general, the review process comprises three main steps, namely planning, conducting and reporting. The planning step involves identifying the need for the review, which is motivated in Sect. 1, and defining the research questions (see Sect. 3.1) along with creating a review protocol. The conducting step involves identifying a wide range of primary studies pertaining to the research questions and then selecting those that are most relevant (see Sect. 3.2). The quality of primary studies is evaluated and, to prevent any potential biases, the decision is based solely on the source of the primary studies. Upon obtaining the primary studies, data extraction forms are developed to accurately record the information that researchers obtain from them (see Sect. 3.3), which is later synthesised. Finally, the reporting step involves specifying mechanisms for dissemination and formatting the main report. To ensure replicability, the review protocol, as well as the outcomes of both the search and review processes, is available as supplementary material.

3.1 Research questions

This paper aims at responding to the following research questions (RQs):

In recent years, there has been a significant increase in the number of papers on AutoML. However, many of these papers are not explicitly classified as AutoML, which could lead to a blurred understanding of their scope, applicability and categorisation. The lack of a common terminology can also lead to confusion and inconsistencies in the field, hindering progress and collaboration. Therefore, we envisage that the establishment of a common taxonomy will assist researchers and practitioners in understanding and communicating each other’s work more precisely and consistently, ultimately advancing the field of AutoML.

The aim of this research question is to examine the quantitative evolution of the field of AutoML. The rationale behind this lies in the fact that, while some AutoML problems, such as AS and HPO, have been studied for decades, the term AutoML as a comprehensive discipline was only introduced in 2014. By answering this question, researchers and practitioners will gain insight into the rate at which new studies are being published. Understanding the quantitative development of AutoML can also assist in identifying emerging trends and patterns in research, as well as potential areas for future work.

The knowledge discovery process is a fundamental aspect of data mining and involves several key phases. By analysing the different tasks performed in AutoML, researchers and practitioners can gain a better understanding of how each phase of the knowledge discovery process is being addressed in the field, and what techniques are being applied. This information can help categorise current and future proposals, identify strengths and weaknesses in the current approaches, and suggest potential areas for improvement or future research. In addition, a cross-category analysis can assist in identifying the relationships and synergies among phases, tasks and techniques.

Given that AutoML is a broad and still emerging field of research, the rationale behind this research question is to identify emerging trends and open gaps in the field of AutoML for future research. This can help guide future research directions and ensure that research efforts are focused on areas that are most likely to lead to significant advances in the field. Also, this information can help guide researchers and practitioners towards important research areas and facilitate the development of more effective and efficient AutoML systems. Overall, this research question can provide valuable insights into the current state of AutoML research and future directions for the field.

3.2 Literature search and selection strategy

The first step consists of an automatic literature search performed by querying the following digital libraries and citation databases: IEEE Xplore, ScienceDirect, ACM Digital Library, SpringerLink, ISI Web of Knowledge and Scopus. Table 1 shows the three final search strings executed. From the output returned by an initial version of these search strings, we conducted an analysis to define the taxonomy that depicts the main characteristics of current AutoML approaches. This initial version of the taxonomy serves to refine these pilot search strings as well. For example, the strings are completed with sets of tasks and phases that determine a list of terms guiding the search. As a strategy, search strings #1 and #2 seek to cover the main tasks of AutoML, while string #3 is more general and includes those studies considering the automation of any phase composing the knowledge discovery process. In this way, we avoid limiting the search to the main AutoML tasks, thus offering a wider picture of the area. As shown in Fig. 1, a total of 31,048 papers are returned by these queries before removing duplicates and applying the following exclusion criteria:

1. Papers prior to the appearance of the term AutoML, i.e. 2014, are not considered.
2. Papers that are not written in English are not considered.
3. Papers with an unclear evidence of a blinded, peer-review process are not considered.
4. Papers that are not published in conferences ranked A* or A according to the CORE ranking system (footnote 1) are not considered.
5. Papers published in journals not indexed in JCR are not considered.
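The five exclusion criteria above can be read as a simple predicate over paper metadata. The following sketch is only an illustration under assumed field names (`peer_reviewed`, `core_rank`, `jcr_indexed` and so on are hypothetical); it is not the authors' protocol implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Paper:
    # Hypothetical metadata record; field names are assumptions for this sketch.
    title: str
    year: int
    language: str
    peer_reviewed: bool
    venue_type: str                  # "conference" or "journal"
    core_rank: Optional[str] = None  # CORE rank, for conferences only
    jcr_indexed: bool = False        # JCR indexing, for journals only


def exclusion_reason(paper):
    """Return the first exclusion criterion that applies, or None if the paper is kept."""
    if paper.year < 2014:
        return "published before 2014"
    if paper.language.lower() != "english":
        return "not written in English"
    if not paper.peer_reviewed:
        return "unclear peer-review process"
    if paper.venue_type == "conference" and paper.core_rank not in {"A*", "A"}:
        return "conference not ranked A* or A in CORE"
    if paper.venue_type == "journal" and not paper.jcr_indexed:
        return "journal not indexed in JCR"
    return None


papers = [
    Paper("Kept conference paper", 2018, "English", True, "conference", core_rank="A*"),
    Paper("Too old", 2012, "English", True, "journal", jcr_indexed=True),
    Paper("Low-ranked conference", 2019, "English", True, "conference", core_rank="B"),
    Paper("Kept journal paper", 2020, "English", True, "journal", jcr_indexed=True),
]
candidates = [p for p in papers if exclusion_reason(p) is None]
```

Returning the reason rather than a boolean makes it easy to report how many papers each criterion removes.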

Once the filter was applied, we obtained a total of 10,596 candidate papers. It is important to mention that the first three criteria could be integrated into the search strings, depending on the specific query format of each database (see the review protocol in the supplementary material for further details). In order to make the results from the various digital libraries and citation databases consistent and to aid in the cleaning and homogenisation of the retrieved data, a set of Python scripts was developed. For this purpose, we used the bibtexparser and pybliometrics packages. Next, the titles and abstracts of candidate papers are double-checked to ensure that they meet the following inclusion criteria. Firstly, the automation must be defined for a phase of the knowledge discovery process and the contribution must be focused on making real progress in the field of AutoML, rather than on the specific artificial intelligence methods applied. Additionally, the proposal must be independent of a specific dataset, and could be of a theoretical nature.
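As a rough idea of what the homogenisation step involves, the sketch below removes duplicate records retrieved from different databases by comparing normalised DOIs and titles. It uses only the Python standard library and plain dictionaries; it is not the authors' scripts and does not use bibtexparser or pybliometrics. The record layout and the DOIs in the example are made up.

```python
import re


def normalise_title(title):
    """Lower-case the title and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first occurrence of each paper, matching on DOI or on normalised title."""
    seen_dois, seen_titles, unique = set(), set(), []
    for record in records:
        doi = (record.get("doi") or "").strip().lower()
        title = normalise_title(record.get("title") or "")
        if (doi and doi in seen_dois) or (title and title in seen_titles):
            continue  # already retrieved from another database
        if doi:
            seen_dois.add(doi)
        if title:
            seen_titles.add(title)
        unique.append(record)
    return unique


# Made-up records, as they might come from three different databases.
records = [
    {"title": "Auto-WEKA: Combined Selection", "doi": "10.0000/ABC", "source": "db1"},
    {"title": "Auto WEKA combined selection", "doi": None, "source": "db2"},
    {"title": "Auto-WEKA (preprint title)", "doi": "10.0000/abc", "source": "db3"},
    {"title": "A different paper", "doi": "10.0000/xyz", "source": "db1"},
]
unique_records = deduplicate(records)
```

Matching on either key catches records that lack a DOI in one database, at the cost of occasionally merging distinct papers that share an identical title.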

The application of these inclusion criteria reduces the number of selected papers to 404. Also, variants of a paper presenting similar results (or a subset of the results) without a clearly differentiated contribution are removed. Note that extended versions in journals prevail over conference versions. The identification of these variants concludes with 392 primary studies, which were complemented by a snowballing procedure consisting of checking cross-references and detecting papers potentially omitted by the automatic literature search. More precisely, each referenced paper is treated as a potential primary study as if it had been obtained through the search strings. Then, inclusion and exclusion criteria are applied as already described. To avoid the exponential growth of primary studies, we limit the snowballing procedure to one level. That is, it is not applied to primary studies obtained through it. As shown in Fig. 1, 447 primary studies were finally selected for the data collection and review process.
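The one-level restriction can be made concrete with a short sketch. It assumes a hypothetical mapping from each paper to the papers it cites and a caller-supplied eligibility check standing in for the inclusion and exclusion criteria; the identifiers are placeholders.

```python
def snowball_one_level(primary_studies, references, is_eligible):
    """Return eligible papers cited by the seed studies, without recursing further."""
    known = set(primary_studies)
    added = []
    # Only the original seed set is traversed, so newly added papers are never expanded.
    for paper in primary_studies:
        for cited in references.get(paper, []):
            if cited not in known and is_eligible(cited):
                known.add(cited)
                added.append(cited)
    return added


# Placeholder citation data: A and B are seeds; C cites E, but C is not a seed.
references = {"A": ["C", "D"], "B": ["C", "A"], "C": ["E"]}
added = snowball_one_level(["A", "B"], references, is_eligible=lambda p: p != "D")
```

Here C is added because a seed study cites it, D is rejected by the eligibility check, and E is never examined because it is only reachable through C.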

3.3 Data collection process

Next, we conduct the review process by thoroughly analysing the primary studies. With this aim, these papers are randomly distributed among the three authors according to the following rules: (1) every paper should be analysed by at least two authors; (2) there should be a balance among the reviewers with respect to the number of papers, their source (i.e. journal, conference) and the search string they are related to. Following these rules, the assigned authors fill in a data extraction form for each paper. Then, the completed forms concerning the same paper are compared to mitigate any possible error during the review process. In the event of discrepancies, the third author is consulted to reach a consensus form. It should be noted that all papers have been objectively reviewed under strict control conditions, and no other information is inferred during the extraction process. Therefore, all data reflected in the extraction forms is explicitly collected from primary studies. Consequently, data may be missing for some categories in some of the primary studies analysed. Notice that the authors of the papers are not contacted in order to avoid any type of subjectivity or bias.
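One simple way to satisfy rule (1) while balancing the number of papers per reviewer is to shuffle the papers and cycle through the possible reviewer pairs, as sketched below. This is an assumed procedure for illustration only: it does not stratify by source or search string as rule (2) also requires, and the reviewer and paper identifiers are placeholders.

```python
import itertools
import random
from collections import Counter


def assign_reviewers(papers, reviewers, seed=0):
    """Randomly assign each paper to a pair of reviewers, rotating pairs to balance load."""
    rng = random.Random(seed)
    shuffled = list(papers)
    rng.shuffle(shuffled)
    pairs = list(itertools.combinations(reviewers, 2))  # 3 pairs for 3 reviewers
    return {paper: pairs[i % len(pairs)] for i, paper in enumerate(shuffled)}


papers = [f"P{i}" for i in range(9)]
assignment = assign_reviewers(papers, ["R1", "R2", "R3"])
# Number of papers each reviewer has to analyse.
load = Counter(reviewer for pair in assignment.values() for reviewer in pair)
```

With three reviewers, the reviewer left out of each pair is the natural arbiter when the two completed forms disagree.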

For each primary study, comprehensive information is collected for classification regarding the phases of the knowledge discovery process being automated, the accomplished tasks and the applied techniques. In addition, information on the experimental framework and the additional material available (mostly related to replicability) is analysed too. These categories are further divided into more specific properties aligned with the taxonomy developed in Sect. 4. A more detailed description of the data extraction form, including these properties and their possible values, can be found in the additional material. Together with this information, we also retrieve a brief summary of the primary study, as well as a brief overview of the future work reported by the authors. In terms of meta-information, each primary study is annotated with the following identification data: (a) author(s) name; (b) title; (c) year of publication; (d) type of publication, i.e. conference or journal; (e) name of publication and publisher; (f) volume, issue and pages; and (g) its DOI.

4 A taxonomy for AutoML

In response to RQ1, Fig. 2 shows our taxonomy, which reflects the main classification scheme obtained from the analysis of current AutoML approaches. Our taxonomy is constructed on the basis of the three core AutoML terms: (1) the phases of the knowledge discovery process being automated; (2) the tasks conducted to perform such automation; and (3) the techniques carrying out these tasks. As explained in Sect. 3, a preliminary version of this taxonomy was first drafted from the pilot literature search and then iteratively refined throughout the review process as primary studies were examined. The resulting taxonomy represents the current state of the art of the field. In Fig. 2, those terms followed by an asterisk symbol (\(*\)) denote extension points, i.e. those points in the classification scheme where the terminology is expected to grow as the number of studies in the area increases. It is worth noting that this taxonomy is not intended to provide an exhaustive list of terms of the area, but a categorisation scheme for AutoML proposals.

As pointed out in Sect. 2.1, there are different methodologies to conduct the knowledge discovery process. They all define a number of phases that are likely to be automated. Here, KDD, CRISP-DM and SEMMA have been considered in this taxonomy as the most general models from which to extract the different phases. Thus, we distinguish between Preprocessing, Data mining and Postprocessing. These three top categories are further divided into more specific terms. The preprocessing encompasses those phases that are performed prior to the generation of models, such as Domain understanding, Dataset creation, Data cleaning and preprocessing and Data reduction and projection. Regarding the data mining phase, both Supervised, i.e. Classification and Regression, and Unsupervised learning, i.e. Clustering and Outlier detection, are considered. Note that only those categories found in the literature are explicitly considered in the taxonomy, without limiting the possibility that future studies may include other types of proposals, such as pattern mining under Unsupervised approaches. Finally, postprocessing includes the Knowledge interpretation and the Knowledge integration phases, which act on the knowledge discovered during the previous phases.

There is a set of Tasks usually conducted in the field of AutoML, which are referred to as Primary tasks in our taxonomy. In contrast, Secondary tasks comprise those problems that have been studied to a lesser extent in the field. Recurring primary tasks are Algorithm selection (AS) and Hyper-parameter optimisation (HPO), which aim at selecting the most accurate ML models, i.e. Model selection. Regarding AS, we differentiate between studies recommending a Single algorithm or several algorithms, either an Unordered set of recommendable algorithms or a Ranking that explicitly indicates the best ones. As for HPO, this category can be further decomposed into Algorithm-dependent and Algorithm-independent proposals, depending on whether they are designed to tune the hyper-parameters of a specific algorithm (or family of algorithms) or not. A representative example of the first group is Neural architecture search, which optimises both the architecture and the hyper-parameters of artificial neural networks. Furthermore, we define Workflow composition as a specific model selection problem, where different algorithms, and optionally their hyper-parameter values, are selected. This task can be further decomposed according to whether the generated workflows follow a Fixed template or a Variable template. It is worth noting that these tasks require a set of algorithms, so they are only applicable to phases for which a catalogue of alternative algorithms is already available; this is not the case, for example, for knowledge interpretation. Focusing on the secondary tasks, we distinguish between Supporting and Ad hoc tasks. On the one hand, supporting tasks refer to those that are closely related to primary tasks and are usually aimed at assisting them. As an example, recommending whether it is worth investing resources in tuning the hyper-parameters of an ML algorithm may accelerate the HPO process. On the other hand, ad hoc tasks are those specifically designed for the phase being automated, e.g. generating a report explaining an ML model in natural language.
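To make the notion of a fixed template more concrete, the sketch below enumerates the search space of a workflow whose sequence of steps is set beforehand, so that composition reduces to choosing one algorithm per step. The step names, the catalogue and its entries are hypothetical placeholders, not taken from any primary study, and hyper-parameters are ignored.

```python
from itertools import product

# Hypothetical catalogue of alternative algorithms per workflow step (placeholder names).
CATALOGUE = {
    "scaling": ["none", "standardise", "min-max"],
    "feature_selection": ["none", "variance_threshold"],
    "classifier": ["decision_tree", "svm", "knn"],
}

def fixed_template_workflows(template, catalogue):
    """Enumerate every workflow that fills each template step with one algorithm."""
    options = [catalogue[step] for step in template]
    return [dict(zip(template, choice)) for choice in product(*options)]

# The template fixes which steps exist and in which order they run.
template = ["scaling", "feature_selection", "classifier"]
workflows = fixed_template_workflows(template, CATALOGUE)
print(len(workflows), workflows[0])
```

Under a variable template, the list of steps itself would also be part of the search, which enlarges the space well beyond this simple Cartesian product.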

Finally, primary studies have shown a wide range of Techniques applied to conduct AutoML tasks. Notice that applying two or more techniques simultaneously to address a single task is a common practice. Techniques can be mostly categorised into three groups: Search and Optimisation, Machine Learning and Others. Regarding Search and Optimisation, there are various techniques available such as Bayesian optimisation, Evolutionary computation, AI Planning, Gradient-based methods, and Multi-armed bandit to conduct tasks such as hyper-parameter optimisation. Additionally, in Machine Learning, it is noteworthy how ML techniques are used to conduct specific AutoML tasks. For instance, Meta-learning is commonly used to develop models for AS. Transfer learning involves reusing knowledge gained from solving a problem to tackle a related one and is often used in conjunction with other techniques. Reinforcement learning, which focuses on how agents should act in an environment to maximise rewards, is typically employed to design neural network architectures. Lastly, the Others category encompasses techniques that are scarcely used in primary studies and do not fall under any of the previous categories. It should be noted that, due to space limitations, the taxonomy covers specific techniques within these categories based on how frequently they appear in current AutoML studies. We expect to expand these categories as new proposals emerge.
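The three dimensions described in this section can be written down as a nested structure, which is convenient when annotating studies programmatically. The sketch below only encodes the terms named in the text; the exact nesting is our reading of the prose rather than a transcription of Fig. 2, and empty lists mark categories whose sub-terms are not enumerated here.

```python
# Terms are taken from the text of this section; the nesting is an assumption.
TAXONOMY = {
    "Phases": {
        "Preprocessing": [
            "Domain understanding",
            "Dataset creation",
            "Data cleaning and preprocessing",
            "Data reduction and projection",
        ],
        "Data mining": {
            "Supervised": ["Classification", "Regression"],
            "Unsupervised": ["Clustering", "Outlier detection"],
        },
        "Postprocessing": ["Knowledge interpretation", "Knowledge integration"],
    },
    "Tasks": {
        "Primary": {
            "Algorithm selection": ["Single algorithm", "Unordered set", "Ranking"],
            "Hyper-parameter optimisation": {
                "Algorithm-dependent": ["Neural architecture search"],
                "Algorithm-independent": [],
            },
            "Workflow composition": ["Fixed template", "Variable template"],
        },
        "Secondary": ["Supporting", "Ad hoc"],
    },
    "Techniques": {
        "Search and Optimisation": [
            "Bayesian optimisation",
            "Evolutionary computation",
            "AI Planning",
            "Gradient-based methods",
            "Multi-armed bandit",
        ],
        "Machine Learning": ["Meta-learning", "Transfer learning", "Reinforcement learning"],
        "Others": [],
    },
}

def leaves(node, path=()):
    """Yield the full path to every term in the taxonomy."""
    if isinstance(node, dict):
        for key, child in node.items():
            yield from leaves(child, path + (key,))
    elif node:
        for term in node:
            yield path + (term,)
    else:
        yield path  # category with no sub-terms listed in the text

for path in leaves(TAXONOMY):
    print(" > ".join(path))
```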

5 Quantitative analysis

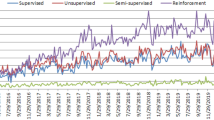

In response to RQ2, this section performs a quantitative analysis of the primary studies. Firstly, the number of contributions per year and their distribution by type of publication (conference or journal) have been calculated. The idea is to identify current publication trends and check how AutoML evolves in different ways. Figure 3 shows the number of primary studies published in conferences and journals per year since the appearance of the term AutoML. For each year, the distribution of papers focused on the different AutoML tasks (see our taxonomy in Sect. 4) is depicted, where the last column shows the total number of papers for that year. Notice that the category HPO has been decomposed into algorithm-independent tasks, HPO(indep), and algorithm-dependent tasks, HPO(dep). All HPO tasks, together with algorithm selection (AS) and workflow composition (WC), make up the papers related to the model selection primary task. Papers on secondary tasks (ST) are also counted. As can be observed, the number of primary studies published between 2014 and 2017 remains fairly steady. However, since 2018 the number of papers has increased significantly, mostly because the number of works related to HPO(dep) has grown. This is due to a large increase in the number of NAS proposals. Also, algorithm selection has traditionally attracted more attention than workflow composition. This is the case until 2020, when we can speculate that there could be a change in trend due to an improvement in computational resources, which offers the possibility of executing workflow composition proposals that include part of the AS and HPO tasks. In the particular case of algorithm-independent proposals, HPO(indep), their number was larger than that of HPO(dep) until 2017. As for the studies focused on secondary tasks, their number is still limited, even though they involve both supporting and ad hoc tasks. Finally, notice that the number of journal papers is notably greater than the number of conference publications in 2021. However, we speculate that this could be due to the COVID-19 situation.

Regarding the type of publication, 55% of primary studies have been presented at conferences, while 45% have been published in JCR journals. Also, observe that, following our search protocol, only top conferences (A* and A) are considered in the analysis. We can find 57 different conferences, including the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) (38 primary studies), the International Conference on Machine Learning (ICML) (20), the International Joint Conference on Neural Networks (IJCNN) (18), the European Conference on Computer Vision (ECCV) (16) and the Genetic and Evolutionary Computation Conference (GECCO) (15). As for the journals, the largest number of primary studies is published in Neurocomputing (19 papers), IEEE Access (17), IEEE Transactions on Pattern Analysis and Machine Intelligence (11) and IEEE Transactions on Neural Networks and Learning Systems (10). In general, no particular preference in terms of tasks or phases of the knowledge discovery process has been observed for one type of publication or the other. However, we observe that algorithm-dependent HPO approaches, which mainly include NAS-specific proposals, are commonly submitted to venues more focused on artificial neural networks or their applications, such as CVPR (37 primary studies), Neurocomputing (15), ECCV (14) or IJCNN (11). Other conferences like GECCO have also accepted NAS-related papers (10), since they commonly apply evolutionary algorithms. Similarly, the largest number of WC studies is found in GECCO (4). As for algorithm-independent HPO approaches, ICML (13) and the International Conference on Artificial Intelligence and Statistics (AISTATS) (9) have attracted the majority of primary studies.

On average, each primary study in this area is co-authored by 4 researchers from 2 distinct institutions. Altogether, over 1000 authors have been identified, most of them having participated in just one primary study. As for international collaborations, the emergence of international networking groups such as the COSEAL (COnfiguration and SElection of ALgorithms) group (Footnote 2) is noteworthy. Figure 4 shows the relationships among collaborating institutions. In this graph, nodes represent affiliations, while their size depicts the number of contributions. The edges indicate collaborations. However, due to space limitations and for the sake of readability, we do not show affiliations with fewer than 6 contributions. As can be seen, the institutions that have co-authored most primary studies are: Google LLC (16 papers in collaboration with others), Carnegie Mellon University (15), Massachusetts Institute of Technology (13), Deakin University (13), Tsinghua University (12) and University of Chinese Academy of Sciences (11). Of these, Beihang University (20), Tsinghua University (18), University of Chinese Academy of Sciences (18) and Tencent (18) have been more proactive in collaborating with other institutions. Other affiliations with a large number of collaborations are Massachusetts Institute of Technology (16), Peng Cheng Laboratory (15), and Hong Kong University of Science and Technology (15).

6 Findings of the review process

In response to RQ3, the analysis presented next, which is founded on the proposed taxonomy in Sect. 4, covers the phases automated (Sect. 6.1), the tasks conducting such automation (Sect. 6.2), and the techniques applied (Sect. 6.3). We have also examined the experiments carried out in the primary studies, as well as their available additional materials (Sect. 6.4). Notice that, due to space limitations and for the sake of readability, Tables 2, 3 and 4 use an identifier for each study whose respective reference can be found in the Primary Studies bibliography.

6.1 Automated phases

Table 2 shows the phases of the knowledge discovery process that have been automated by the primary studies. Notice that AutoML has not yet covered the automation of the entire process. Even so, a number of primary studies (13%) automate more than one phase. This is particularly common in, but not limited to, proposals that automate the preprocessing operations, usually together with the automation of the data mining phase. In general, the phases do not receive equal attention: the data mining phase attracts the most interest from the AutoML community, with up to 93% of primary studies. Although to a lesser extent, AutoML proposals have also addressed the automation of the preprocessing (14%) and postprocessing (1%) phases.

As for the preprocessing, the domain understanding phase is tackled by some proposals that support data scientists during data exploration [107, 111, 118, 168, 263, 287, 402]. Regarding the dataset creation problem, it has only been considered by a limited number of proposals [106, 268]. Observe that more than 81% of the studies about preprocessing are focused on cleaning and transforming the resulting datasets. More specifically, 48% of studies refer to data cleaning and preprocessing, and typically deal with data scaling [331, 343, 375, 428, 450, 473], missing values handling [106, 129, 273, 445] or both [61, 69, 122, 158, 207, 285, 297, 333, 356, 361, 408]. Note that, with the exception of [59, 62, 74, 140, 278], these proposals are not exclusively dedicated to these particular activities, but they also cover the data mining phase. Data reduction and projection are also addressed (79%). In this case, some primary studies address feature engineering [50, 119, 203, 206, 264, 293, 341, 390, 461]. A small number of studies are particularly devoted to feature selection [148, 316, 317]. As mentioned above, most of these primary studies automate some data mining phase too, such as classification [61, 122, 129, 285, 295, 297, 307, 331, 343, 375, 395, 408, 445, 450], regression [61, 207, 212, 273, 297, 450] and clustering [484]. The same happens with feature extraction, which is automated together with the data mining phase [61, 69, 74, 91, 93, 122, 129, 135, 158, 200, 297, 307, 311, 343, 356, 361, 375, 377, 408, 428, 450, 482, 484]. Just a few studies [59, 74, 393] are an exception in this case.

Turning specifically to the data mining phase, we should take into account whether a study is an HPO proposal or not, since the former are usually independent of the data mining technique being conducted. Thus, these studies have been classified according to the data mining problem(s) being addressed in their experimental validation. They are shown in Table 2 as a separate list of references marked with the symbol \(\dagger \). Independently of the AutoML task carried out, we can observe that the automation of supervised learning, i.e. classification and regression, has attracted most of the attention (91% and 14%, respectively) compared to unsupervised techniques like clustering and outlier detection (2% and <1%). In fact, to the best of our knowledge, other unsupervised problems, such as pattern mining or association rule mining, have not been explored yet in this field. As argued by Ferrari et al. [128], most problems addressed with unsupervised methods lack a previously known solution set. Regardless of this point, most studies automating data mining are related to HPO. However, they rarely give support to more than one phase. The same does not apply to the studies addressing other AutoML tasks. Among them, focusing on supervised learning, proposals automating classification or regression also consider some preprocessing methods (44% and 56%, respectively). More specifically, some automate the data reduction and projection phase before building the classification [57, 135, 212, 295, 484] and regression [212] models. Data cleaning and preprocessing have been considered too (83%), but always together with data reduction and projection, with the sole exception of [294]. In this case, they have been applied before the classification [61, 69, 121, 122, 129, 158, 204, 207, 273, 285, 297, 307, 311, 331, 333, 343, 356, 361, 375, 408, 428, 445, 473] or regression [61, 69, 207, 273, 297, 311, 361, 473] algorithms. Regarding unsupervised learning, no primary study has considered any preprocessing method, with the sole exception of [484], which automates data reduction and projection before building the clusters.

Finally, the actions performed during the postprocessing phase are inherently human in nature, requiring a certain amount of experience, know-how and intuition. As a consequence, barely 1% of the primary studies automate this part of the knowledge discovery process. In particular, we found proposals dealing with the knowledge interpretation [248, 389] and knowledge integration [72] phases.

6.2 AutoML tasks

Automation can be conducted in different ways, depending on the operation or task performed (see Sect. 2.2). Following our taxonomy, Table 3 lists the studies that carry out some primary or secondary task(s). As can be observed, there is a large difference in the number of contributions referring to each task, with HPO attracting by far the most attention (77% of primary studies). In contrast, a smaller number of proposals conduct secondary tasks (11%); 7% of primary studies are dedicated to supporting one of the primary tasks, i.e. AS, HPO or WC.

Regarding the primary AutoML tasks, 9% of the reviewed manuscripts perform algorithm selection, i.e. they recommend the best preprocessing or ML algorithms for a given dataset. Although AS has been widely studied, recent proposals have started to address new problems like clustering [128, 324, 325, 398] or data stream forecasting [344]. Regardless of the automated phase, the most common strategy is to recommend a single algorithm (57%), but other approaches can also select an unordered set (20%) or a ranking (30%) of the best algorithms. Notice that these percentages add up to more than 100% because some studies conduct more than one type of recommendation. On the other hand, HPO covers 77% of the primary studies. According to our taxonomy, these proposals can be divided into two groups depending on whether they are independent of the algorithm whose hyper-parameters are tuned (25%) or not (75%). Also, NAS proposals, which represent the majority of studies (70%), have been differentiated from the rest of the algorithm-dependent HPO approaches. It is worth noting that some approaches optimise neural networks without considering their architecture [40, 189, 251, 266, 310, 386, 393, 475]. In addition, there are approaches optimising clustering algorithms [327, 398] and kernel-based algorithms, such as support vector machines [76, 283, 289, 343, 401], graph kernel methods [275], or conditional mean embeddings [167].

Finally, among the primary tasks, workflow composition involves 8% of primary studies. Again, this task can be further decomposed into two categories depending on whether the workflow structure has been fixed in advance (37%) or not (63%). Regardless of this subcategory, all these proposals choose between different alternative algorithms, like decision tree or SVM, in order to perform each step of the workflow, e.g. classification, with the exception of [71], which only optimises hyper-parameters. Most of these WC proposals optimise a workflow composed of one or more preprocessing methods and a data mining algorithm. In contrast, other approaches [57, 77, 433] only consider classification algorithms, thus defining a classifier ensemble as a type of workflow outcome. Notice that some proposals have not considered the optimisation of hyper-parameters [57, 69, 207, 484], although some recognise it as interesting future work [295].

Of the 11% of studies that refer to secondary tasks, 66% explore a supporting task. For those supporting AS, some proposals identify the best meta-features describing a dataset [58, 109, 326], build a portfolio of algorithms for subsequent selection [164], reduce the execution time of the task [346], or assess the quality of an algorithm [278]. As for the case of supporting HPO, these approaches have been focused on recommending whether it is worth investing resources in the task [269, 270, 347, 392], predicting whether a given configuration will lead to better results [329, 335, 391], identifying the most relevant hyper-parameter to be tuned [260, 339, 376], distributing the optimisation process in a cluster [453], or speeding up the optimisation [67, 180]. Also, there are other approaches intended to assist NAS by shrinking the search space [174], predicting the performance of an ANN architecture [301, 383] or ensuring diversity during the search [171]. Finally, with respect to WC, some approaches aim at recommending the best knowledge discovery workflow [207, 428], speeding up the evaluation of workflows [298], improving interpretability [422], or generating a complete search space from a partially defined one by adding new preprocessing and ML algorithms [68]. On the other hand, ad hoc tasks account for 34% of all primary studies covering a secondary task. Under this category, both preprocessing and postprocessing are considered. With respect to the former, there are approaches aimed at creating tabular datasets [106, 268], generating data visualisations from the datasets [111, 402], fixing data inconsistencies [62] and generating new features [50, 119, 203, 206, 264, 293, 341, 390, 461]. As for the postprocessing step, approaches explaining the resulting ML models [248, 389] or generating their source code [72] have been proposed as well.
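Because the percentages in this subsection are nested (shares of a share), it can help to convert them to shares of all primary studies. The short calculation below does so for the secondary tasks using only the rounded figures quoted in the text, so the results are approximate; the variable names are ours.

```python
# Rounded shares quoted in the text.
secondary_share = 0.11              # studies covering a secondary task, over all primary studies
supporting_within_secondary = 0.66  # supporting tasks, over studies covering a secondary task
ad_hoc_within_secondary = 0.34      # ad hoc tasks, over studies covering a secondary task

# Shares relative to all primary studies.
supporting_overall = secondary_share * supporting_within_secondary
ad_hoc_overall = secondary_share * ad_hoc_within_secondary

print(f"supporting tasks: {supporting_overall:.1%} of all primary studies")
print(f"ad hoc tasks: {ad_hoc_overall:.1%} of all primary studies")
```

The first product is consistent with the 7% reported at the beginning of Sect. 6.2 for studies supporting a primary task.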

6.3 Applied techniques and methods

Table 4 compiles the techniques that have been applied in primary studies to carry out their respective AutoML tasks of interest. These techniques are divided into two clearly distinct categories, search and optimisation (74%) and machine learning (41%), apart from other proposals that do not fall into either of these groups (12%). Also, it is worth noting that only the main techniques of each study are categorised.

Focusing on the search and optimisation category, evolutionary algorithms [3] are the most frequent techniques (35%). Among them, genetic algorithms [40, 60, 63, 74, 75, 78, 88, 96, 100, 102, 104, 125, 127, 151, 153, 169, 193, 201, 210, 214, 221, 230, 244, 249, 256, 257, 258, 259, 265, 271, 284, 286, 292, 309, 311, 315, 336, 348, 358, 360, 366, 370, 378, 379, 384, 400, 401, 406, 439, 454, 458, 475], grammatical evolution [41, 49, 51, 105, 121, 122], evolution strategy [74, 144, 451, 479] and differential evolution [74, 332, 351] are the preferred alternatives. Also, it is common to find other approaches based on genetic programming: canonical genetic programming [57, 211, 307, 337, 390], Cartesian genetic programming [119, 371, 372], grammar-guided genetic programming [345] and strongly typed genetic programming [484]. Meta-heuristic methods like swarm intelligence (6%) and greedy search (3%) are also applied. Particularly, all the proposals based on swarm intelligence rely on particle swarm optimisation (PSO) algorithms, with the exception of [183, 192, 289] that are founded on the behaviour of ants, bats and bees, respectively. Also, note that variants for multi-objective optimisation of these methods have been applied, more specifically to genetic algorithms [60, 63, 78, 88, 153, 169, 244, 249, 256, 257, 258, 259, 271, 311, 348, 400], genetic programming [307, 484] and PSO [93, 200, 283, 404], among others. Other methods like gradient-based methods (18%), multi-armed bandit (7%), random search (4%) and AI planning (2%) can be found among the primary studies. Finally, other search and optimisation techniques include bilevel programming [134], racing algorithms [464] or coordinate descent [98, 448], among others.

Sequential model-based optimisation [32] is the second leading technique (31%). These studies can be categorised according to their surrogate model and acquisition function. As for the surrogate model, Gaussian processes are used by most proposals (48 out of 94). Other common alternatives are artificial neural networks [116, 242, 321, 350, 362, 364, 367] and random forest [60, 70, 71, 129, 144, 204, 212, 267, 273, 297, 373, 398]. Regarding the acquisition function, most studies apply expected improvement (41 out of 94). The upper confidence bound [103, 194, 198, 202, 231, 299, 300, 385], lower confidence bound [60, 71, 304] and Thompson sampling [162, 276, 373] are also applied. In general, sequential model-based optimisation methods evaluate a single hyper-parameter configuration at each iteration, although some approaches evaluate a batch of them [108, 110, 146, 194, 202, 299, 354, 369, 436]. Finally, it is worth noting the existence of studies that are specifically devoted to the definition of new surrogate models (8 out of 94) and/or acquisition functions (25 out of 94).
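To illustrate the role of the acquisition function, the sketch below computes the standard closed-form expected improvement for a minimisation problem, assuming that the surrogate returns a Gaussian prediction (mean and standard deviation) for each candidate configuration. The candidate names and numerical values are invented for the example, and the `xi` margin is an optional assumption that is not discussed in the primary studies summarised here.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected improvement for minimisation under a Gaussian prediction.

    mu, sigma: mean and standard deviation predicted by the surrogate model
    best: lowest objective value observed so far
    xi: optional exploration margin (assumption; 0 gives the plain formula)
    """
    if sigma <= 0.0:
        # No predictive uncertainty: the improvement is deterministic.
        return max(best - mu - xi, 0.0)
    z = (best - mu - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)  # standard normal density
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))         # standard normal CDF
    return (best - mu - xi) * cdf + sigma * pdf

# Hypothetical surrogate predictions (mean error, std) for three configurations.
candidates = {"c1": (0.30, 0.01), "c2": (0.28, 0.05), "c3": (0.35, 0.20)}
best_so_far = 0.29
scores = {name: expected_improvement(m, s, best_so_far) for name, (m, s) in candidates.items()}
next_config = max(scores, key=scores.get)  # configuration to evaluate next
print(scores, next_config)
```

In this toy example the configuration with the worst predicted mean is selected because its large predictive uncertainty dominates, which shows how the acquisition function trades off exploitation against exploration.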

As for the machine learning category, transfer learning is the most recurrent technique (48%). Nevertheless, it is often used in conjunction with other techniques, such as gradient-based methods (34 out of 87), reinforcement learning (17) and evolutionary algorithms (17). Meta-learning, together with reinforcement learning, is the second leading technique (24%). These proposals can be divided according to the method that is used at the meta-level. Here, classification algorithms, such as decision trees [140, 205, 269, 270, 316, 317, 326], neural networks [99, 168, 213, 269, 287, 293, 317], random forest [95, 203, 269, 270, 317, 324, 326, 344], support vector machines [99, 196, 269, 270, 326], naive Bayes [269, 270, 344], and k-nearest neighbours [67, 99, 267, 269, 270, 324, 411], are the preferred alternatives. Also, regression algorithms, such as random forest [58, 59, 329], support vector regression [338], decision trees [329], Gaussian processes [270, 393] or XGBoost [397], are considered. In fact, Sanders et al. [347] consider both classification and regression algorithms during the learning. Although to a lesser extent, clustering algorithms [325, 442] have been used for the recommendation too. There are methods [38, 128, 340] that take advantage of the similarities between datasets, measured by a set of meta-features, without relying on the aforementioned data mining methods. Another recurrent ML approach is ensemble learning (3%), which is commonly applied to combine the best models found during AS [205, 342], NAS [51, 131, 482] or WC [129, 433]. Finally, other ML techniques (16%) include a variety of approaches like inductive logic programming [389] or multiple kernel learning [275], among others.
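As a minimal sketch of meta-learning for algorithm selection with a k-nearest-neighbours meta-model, the code below recommends algorithms for a new dataset by looking at which algorithm performed best on the most similar, previously seen datasets. The meta-features, the meta-dataset and the algorithm labels are all invented for illustration and do not come from any primary study.

```python
import math
from collections import Counter

# Hypothetical meta-dataset: (meta-features, best algorithm) for previously seen datasets.
# Meta-features: (log10 of instances, log10 of features, class entropy); values are invented.
META_DATASET = [
    ((2.0, 1.0, 0.9), "knn"),
    ((2.3, 1.2, 1.0), "knn"),
    ((4.0, 1.5, 0.5), "random_forest"),
    ((4.5, 2.0, 0.6), "random_forest"),
    ((3.0, 3.0, 1.0), "svm"),
]

def recommend(meta_features, meta_dataset, k=3):
    """Rank algorithms by how often they were best on the k most similar datasets."""
    neighbours = sorted(meta_dataset, key=lambda row: math.dist(row[0], meta_features))[:k]
    votes = Counter(label for _, label in neighbours)
    return [algorithm for algorithm, _ in votes.most_common()]

ranking = recommend((2.1, 1.1, 0.95), META_DATASET)  # ranking-style recommendation
single = ranking[0]                                   # single-algorithm recommendation
print(ranking, single)
```

The same function covers two of the recommendation styles distinguished in the taxonomy: the full list is a ranking, while its first element is a single-algorithm recommendation.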

In Table 4, the category “Others” includes those proposals applying neither SO nor ML techniques. It is worth noting the wide range of techniques applied by these proposals, such as collaborative filtering [135, 207], model checking [278], case-based reasoning [97], data envelopment analysis [401], conditional parallel coordinates [422] or fractional factorial design [260], among others. As the study of the area progresses, we envision that this category may be reformulated or broken down into other categories and integrated into the taxonomy.

6.4 Experimental framework and additional material

Presenting an experimental framework to assess the validity of the proposed approach is a common practice in most primary studies (99%). In this section, we analyse the main components of the experimentation, including the type of case study, the comparative framework or the use of statistical validation. Empirical studies, i.e. those setting a hypothesis and constructing a method to qualitatively or quantitatively validate it, and discussing findings that support such a hypothesis are a consolidated practice in the area (98%), in contrast to purely theoretical proposals (1%) or the use of sample executions (1%). A combination of a sample execution with some empirical validation can be found too [292].

In terms of the case study used for the experimental validation, there is a clear preference for those benchmarks and datasets obtained from the literature (98%). The UC Irvine Machine Learning Repository (Footnote 3) and OpenML (Footnote 4) are well-known and frequently referenced repositories, as is the CIFAR dataset (Footnote 5) in the case of NAS proposals. In contrast, only 6% of the primary studies generate their own synthetic datasets. In fact, notice that, with the exception of [161, 196, 350, 357, 428], these studies combine their own datasets with others taken from the literature. Regardless of the datasets being used, the analysis of the comparison framework reveals that 93% of the primary studies compare their proposals against other approaches from the literature. In this vein, AutoML approaches are the preferred option (69%) for comparison. However, a large number of primary studies compare their results against other proposals not belonging to this specific area. For example, NAS approaches can be compared against hand-crafted architectures. Furthermore, we found that 47% of the studies compare their proposals against variants of themselves.

Finally, statistical methods allow producing trustworthy analyses and reliable results during the comparison. However, it is notable that only 19% of primary studies are supported by statistical evidence. Pairwise comparison (14%) and multiple comparison (6%) analyses are the most frequent choices. Regarding the pairwise comparison, the t-test is the preferred option (30 out of 62), followed by the Mann–Whitney U test (19) and the Wilcoxon signed-rank test (16). As for multiple comparison, the Friedman (20 out of 27) and Nemenyi tests (9) are the most recurrent. Some primary studies also combine both types of statistical tests [95, 150, 161, 196, 222, 270, 316, 317, 372, 390, 434]. Finally, it is worth noting that some manuscripts claim that they conduct some sort of statistical analysis, but they do not describe the methodology explicitly [98, 164, 260, 352, 396, 422].
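As an example of a multiple comparison analysis, the sketch below computes the Friedman chi-square statistic from the scores of several methods over several datasets, using average ranks and no tie correction. The accuracy values are invented, and the function names are ours; in practice an established implementation such as `scipy.stats.friedmanchisquare` would normally be preferred.

```python
def average_ranks(row):
    """Rank the scores of one dataset (1 = highest score), averaging ranks over ties."""
    order = sorted(range(len(row)), key=lambda j: -row[j])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # average of the 1-based positions i..j
        for pos in range(i, j + 1):
            ranks[order[pos]] = mean_rank
        i = j + 1
    return ranks

def friedman_statistic(scores):
    """Friedman chi-square for scores[dataset][method], without tie correction."""
    n, k = len(scores), len(scores[0])
    ranked = [average_ranks(row) for row in scores]
    mean_ranks = [sum(r[j] for r in ranked) / n for j in range(k)]
    chi2 = 12 * n / (k * (k + 1)) * (sum(r * r for r in mean_ranks) - k * (k + 1) ** 2 / 4)
    return chi2, mean_ranks

# Hypothetical accuracies of three methods (columns) on four datasets (rows).
scores = [
    [0.90, 0.85, 0.80],
    [0.88, 0.86, 0.79],
    [0.75, 0.70, 0.72],
    [0.93, 0.91, 0.90],
]
chi2, mean_ranks = friedman_statistic(scores)
print(chi2, mean_ranks)
```

The statistic is compared against a chi-square distribution with k - 1 degrees of freedom (k being the number of methods), although this approximation is known to be coarse for as few datasets as in this toy example. If the null hypothesis is rejected, a post hoc test such as Nemenyi is typically applied to the mean ranks.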

We have also inspected the additional material made available by the authors of the primary studies, as a way to check their replicability and reproducibility. Software artefacts are publicly available in 33% of cases. From those, sharing the source code is a common practice (96%), as well as providing some manuals, installation instructions or software guides (77%). In this context, Table 5 shows the top-5 GitHub repositories deployed in the primary studies (Footnote 6). On the one hand, [307] and [129] are related to TPOT and auto-sklearn, respectively, which are approaches for workflow composition. On the other hand, [44] and [144] correspond to tools implementing different methods for hyper-parameter optimisation. Finally, [368] proposes a NAS method. It is worth noting that some tools [44, 129] are still under continuous development according to the frequency of commits.

Focusing on those studies providing software artefacts, in terms of their practicability and usability, it is worth noting that only 11% of the studies provide software with a user-friendly graphical user interface, as opposed to those proposals based on a command-line interface (73%). Moreover, in addition to software artefacts, some primary studies (36%) include other types of additional information that complement the manuscript. Among them, the most frequent are reports and attached documents (79%), datasets provided for replicability purposes (4%) and raw data of the experimental outcomes (19%). Finally, it is worth noting the existence of other types of supplementary material (17%) like videos [152, 161, 168, 226, 247, 267, 277, 280, 281, 304, 311, 403, 428, 449], online demo tools [111, 329] or interactive visualisation of results [350, 432].

7 Cross-category data analysis

To complement the analysis in response to RQ3, we have conducted a cross-analysis of the relationships between the different perspectives of our taxonomy: phases, tasks and techniques. This is intended to identify trends, gaps or potential limitations in the current application of AutoML. First, we contrast the data on automated phases with the tasks performed (see Sect. 7.1). Then, we examine possible relationships between tasks and the techniques applied (see Sect. 7.2).

7.1 Phases and tasks

The graphical representation in Fig. 5 illustrates the frequency with which specific tasks (rows) have been used to automate various phases (columns) of the knowledge discovery process. It is important to note that this figure presents a grid of all possible combinations of phases and tasks, which may include some that appear unlikely or even impossible. The relative frequency of application of each task for a phase is depicted following a coloured pattern, where black cells represent frequency equal to 1, i.e. only the referred task has been used in the context of the phase. Cells contain the number of primary studies found for each task-phase pair. As can be observed, if we group phases into preprocessing, data mining and postprocessing, there is no clear trend in terms of which tasks are applied in each category, although the marginal use of ad hoc tasks in the data mining phase can be highlighted, while their use is exclusive in the case of postprocessing.
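The normalisation behind the coloured pattern can be illustrated with a minimal sketch. It assumes that the relative frequency of a task for a phase is the number of studies for that task-phase pair divided by the total over all tasks for the same phase, which is consistent with a value of 1 meaning that only that task has been used. The counts below are illustrative placeholders and not the values reported in Fig. 5; the function name `relative_frequency` is hypothetical.

```python
from typing import Dict

# Illustrative task-by-phase counts (placeholders, NOT the data of Fig. 5).
counts: Dict[str, Dict[str, int]] = {
    "AS": {"domain understanding": 5, "knowledge interpretation": 0},
    "ad hoc": {"domain understanding": 2, "knowledge interpretation": 2},
}


def relative_frequency(table: Dict[str, Dict[str, int]]) -> Dict[str, Dict[str, float]]:
    """Divide each task-phase count by the total count of its phase (column)."""
    phases = {phase for row in table.values() for phase in row}
    # Column totals: number of task-phase occurrences per phase.
    totals = {p: sum(row.get(p, 0) for row in table.values()) for p in phases}
    return {
        task: {
            # A phase with no studies at all gets frequency 0 for every task.
            p: (row.get(p, 0) / totals[p] if totals[p] else 0.0)
            for p in phases
        }
        for task, row in table.items()
    }


rel = relative_frequency(counts)
```

Under this assumption, a cell equal to 1.0 (a black cell) indicates that the task in that row is the only one applied to the phase in that column.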

Focusing on preprocessing, note that domain understanding, an inherently human activity, has only been automated by means of AS (5) and ad hoc (2) tasks, focused on selecting [107, 118, 168, 263, 287] or explicitly generating [111, 402] the best visualisation technique(s) according to the properties of the dataset. Ad hoc tasks are also defined to automate the creation of a tabular dataset with the attribute-value format required by most ML algorithms. In this vein, De Raedt et al. [106] generate a tabular dataset from a dataset formed by a collection of worksheets containing likely incomplete data. Similarly, Mahuele et al. [268] have used linked open data to build this type of dataset. A wider range of tasks has been conducted to automate data cleaning and preprocessing, as well as data reduction and projection. However, both phases coincide in the predominant use of workflow composition tasks and in the limited use or lack of use of HPO tasks. Regarding WC, algorithms handling missing values, normalising data or extracting and selecting features are commonly used for composing workflows. As for HPO, a number of primary studies [74, 93, 200, 377] address data reduction by using a NAS method to optimise the architecture of an autoencoder. Having model selection as a primary task, algorithm selection appears in both phases as well. In this case, studies are focused on recommending the best label noise filter [140], providing a feature selection method [148, 316, 317] and, more generally, the best preprocessing algorithm including feature extraction, normalisation, discretisation and missing values methods [59]. Also, although they are not a majority in their respective phases, some primary studies present proposals that perform secondary supporting tasks to evaluate the effectiveness of a cleansing function [278] or to recommend workflows involving both phases [207, 428]. Ad hoc tasks are proposed to fix data inconsistencies [62] and handle missing values in the context of data cleaning [106]. As for data reduction and projection, they are also considered for feature generation either by combining those existing within the original dataset [57, 74, 119, 203, 206, 264, 293, 390, 461] or by extracting them from other structured [341] or unstructured [50] external sources.

In the case of the data mining phase, it can be observed that, regardless of the particular task, classification concentrates the largest number of contributions (391), followed by regression (59). In both cases, the predominance of proposals that carry out NAS is noticeable (229 and 17, respectively). It is worth noting that 70% of all these NAS proposals have been published since 2020. Also, the use of algorithm-independent proposals for HPO in both classification and regression is relevant. However, only [165, 194, 247, 304, 367, 418, 450] consider both phases in their experimental frameworks. For the rest of primary tasks, workflow composition has a relative importance, mostly for classification. In fact, with the exception of [71], the studies that automate regression also consider classification. Then, focusing on AS, it has covered the three subcategories described in our taxonomy, i.e. single [57, 58, 99, 162, 164, 176, 179, 213, 248, 267, 342, 345, 352, 395, 406], unordered set [205, 338, 344, 411] and ranking [38, 47, 95, 340, 397]. Regarding HPO, neural networks [40, 251, 266, 310, 386, 475] and SVM [63, 76, 283, 289, 401] hyper-parameters are commonly tuned. In the case of clustering and outlier detection, algorithm selection is the most studied task, aimed at recommending either a single algorithm [196, 398] or a ranking of algorithms [128, 324, 325]. In particular, the only primary studies found for outlier detection are [196] and [237], which build an SVM model to recommend the best outlier detection method among 14 alternatives and define a NAS method for outlier detection, respectively. As for clustering, it has also been automated by HPO [327, 398, 457] and WC [71, 484] studies. Finally, there is no primary study presenting an ad hoc task in this phase, but we could find some supporting tasks for AS, HPO and WC, mainly for classification. In fact, the classification setting is also considered by the approaches addressing regression [207, 301] and clustering [453] problems.

As mentioned in Sect. 6.1, postprocessing activities have been only marginally automated, and always by means of ad hoc tasks. On the one hand, knowledge interpretation has been automated with the aim of explaining machine learning models by exploiting linked open data [389] and generating natural language descriptions [248]. On the other hand, Castro-Lopez et al. [72] defined an approach for knowledge integration that automatically generates the source code of a machine learning model.

7.2 Tasks and techniques

Similar to Sect. 7.1, Fig. 6 shows how often a certain task (rows) has been performed by applying a given technique (columns). Again, the coloured pattern represents the relative frequency of automation of each task by a technique (the darker, the closer to 1). As can be observed, evolutionary algorithms, sequential model-based optimisation (i.e. Bayesian optimisation), multi-armed bandit and meta-learning are the most recurrent techniques to address the largest number of tasks. Next, we discuss all techniques by each particular task.

Focusing on algorithm selection, meta-learning is the most common option (53%), regardless of the number of algorithms being recommended. Other techniques like evolutionary algorithms [57, 342, 345, 406] and greedy search [248, 395] have also been applied. In contrast, recommending an unordered set of algorithms has been performed only with meta-learning techniques [140, 205, 338, 344, 411], with the exception of [118]. It is worth noting the existence of other techniques to recommend either a single algorithm or a ranking of algorithms, such as sequential model-based optimisation [162, 406], multi-armed bandit [176, 179, 352], traditional classification [263] and clustering [148], or racing algorithms [164]. Finally, some approaches build ensembles with the best selected algorithms [205, 342].
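To make the idea of meta-learning for algorithm selection concrete, the following minimal sketch recommends a single algorithm for a new dataset by looking up the most similar previously seen dataset in a meta-base. It is only one simple instance of the technique (a 1-nearest-neighbour lookup) and is not claimed to be the method of any cited study. The meta-features, the algorithm labels and the function name `recommend` are all hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# A meta-example pairs the meta-features of a past dataset with the
# algorithm that performed best on it (all values are hypothetical).
MetaExample = Tuple[Sequence[float], str]

# Assumed meta-features: (log10 of #instances, #features, class entropy).
meta_base: List[MetaExample] = [
    ((3.0, 10.0, 1.0), "random_forest"),
    ((5.0, 200.0, 0.3), "linear_svm"),
    ((2.0, 4.0, 0.9), "naive_bayes"),
]


def recommend(base: List[MetaExample], query: Sequence[float]) -> str:
    """Return the best-known algorithm of the nearest past dataset (1-NN)."""
    if not base:
        raise ValueError("the meta-base must contain at least one example")
    # Euclidean distance on raw meta-features; in practice the features
    # would usually be normalised first so that no single one dominates.
    nearest = min(base, key=lambda example: math.dist(example[0], query))
    return nearest[1]


suggestion = recommend(meta_base, (3.1, 12.0, 0.9))
```

Returning the labels of the k nearest meta-examples instead of one would yield an unordered set, and sorting them by distance would yield a ranking, which mirrors the three AS subcategories of the taxonomy.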

Sequential model-based optimisation is the most frequently applied technique in algorithm-independent HPO (57%). Similarly to meta-learning in the context of AS, Bayesian optimisation has been established as the predominant technique for black-box optimisation, especially in recent years [19]. Following the algorithm-independent setting, other techniques considered here are evolutionary algorithms [44, 60, 75, 144, 210, 342, 345, 351, 406], multi-armed bandit methods [44, 123, 144, 184, 229, 231, 247, 339, 373], or gradient-based methods [134, 248, 320], among others. In contrast, Bayesian optimisation has been rarely applied for algorithm-dependent HPO [398]. Instead, evolutionary algorithms [40, 63, 401, 475], gradient-based methods [167, 266, 310, 386] and swarm intelligence [76, 251, 283, 289] are preferred. Focusing on NAS, the predominant technique is evolutionary algorithms (25%), followed by transfer learning (22%), gradient-based methods (14%) and reinforcement learning (10%). In the case of gradient-based methods, they have been applied mainly in recent years [25]. Also, in the particular case of transfer learning, it is worth noting that it is generally used jointly with other techniques with the aim of reusing weights of previously trained architectures.
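As an illustration of how a bandit-style method can drive algorithm-independent HPO, the sketch below implements successive halving: all candidate configurations are evaluated with a small budget, the worst are discarded, and the budget of the survivors is increased. Successive halving is used here only as a simple, well-known representative of this family and is not claimed to be the method of any cited study. The objective `toy_loss`, the parameter names and the default values are assumptions made for the example.

```python
from typing import Callable, Dict, List

Config = Dict[str, float]


def toy_loss(config: Config, budget: int) -> float:
    """Hypothetical objective: lower is better, and more budget reduces it."""
    return (config["lr"] - 0.1) ** 2 + 1.0 / budget


def successive_halving(
    configs: List[Config],
    evaluate: Callable[[Config, int], float],
    min_budget: int = 1,
    eta: int = 2,
) -> Config:
    """Keep the best 1/eta of the configurations per round, growing the budget."""
    if not configs:
        raise ValueError("at least one configuration is required")
    survivors = list(configs)
    budget = min_budget
    while len(survivors) > 1:
        # Rank the surviving configurations by their loss at the current budget.
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        survivors = ranked[: max(1, len(ranked) // eta)]
        budget *= eta  # survivors earn a larger budget in the next round
    return survivors[0]


candidates = [{"lr": 0.001}, {"lr": 0.01}, {"lr": 0.1}, {"lr": 1.0}]
best = successive_halving(candidates, toy_loss)
```

The design choice is to spend little on clearly poor configurations, which is what distinguishes this family from exhaustively evaluating every candidate at full budget.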

Regarding workflow composition, search and optimisation techniques are the most commonly applied and, more specifically, Bayesian optimisation (31%) and evolutionary algorithms (18%). They have been used to generate knowledge discovery workflows regardless of whether their structure is fixed beforehand [71, 129, 135, 212, 222, 294, 297, 343, 361, 375, 473] or not [57, 61, 121, 122, 204, 211, 273, 307, 311, 331, 333, 356, 484]. However, within the category of search and optimisation techniques, AI planning has been only used to compose workflows whose structure is not preset [204, 207, 285, 295]. Since generating workflows of unbounded size significantly increases the search space, some proposals include mechanisms to overcome this issue, such as meta-learning to warm-start the optimisation process [129] or multi-objective evolutionary algorithms to prioritise workflows with fewer algorithms [307].
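The preference for workflows with fewer algorithms in a multi-objective setting can be sketched with a Pareto dominance check over two objectives to be minimised: predictive error and number of algorithms in the workflow. This shows only the comparison step that a multi-objective evolutionary algorithm could rely on, not a complete search procedure, and it is not taken from the implementation of any cited tool. The candidate workflows and their scores are hypothetical.

```python
from typing import List, NamedTuple


class Workflow(NamedTuple):
    name: str
    error: float  # predictive error, to be minimised
    n_steps: int  # number of algorithms in the workflow, to be minimised


def dominates(a: Workflow, b: Workflow) -> bool:
    """True if a is no worse than b on both objectives and better on one."""
    no_worse = a.error <= b.error and a.n_steps <= b.n_steps
    strictly_better = a.error < b.error or a.n_steps < b.n_steps
    return no_worse and strictly_better


def pareto_front(workflows: List[Workflow]) -> List[Workflow]:
    """Return the workflows that no other candidate dominates."""
    return [
        w for w in workflows
        if not any(dominates(other, w) for other in workflows)
    ]


# Hypothetical candidates produced during the search.
candidates = [
    Workflow("A", 0.10, 5),
    Workflow("B", 0.10, 3),
    Workflow("C", 0.08, 6),
    Workflow("D", 0.15, 2),
    Workflow("E", 0.16, 4),
]
front = pareto_front(candidates)
```

Here workflow B removes A from the front because it reaches the same error with fewer algorithms, which is how the second objective discourages unnecessarily long workflows.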

Finally, with regard to secondary tasks, we found that ad hoc tasks rely mostly on ML techniques (40%), while supporting tasks are frequently addressed with meta-learning (21%). More specifically, meta-learning has been used in AS to assess the predictive performance of meta-features [58] and also to generate an algorithm portfolio [164]. It has also been applied to predict whether it is worth providing additional resources on hyper-parameter optimisation through both classification [269, 270, 347, 392] and regression [329, 347] algorithms. Furthermore, meta-learning serves to improve the efficiency of the fine-tuning process by identifying the most relevant hyper-parameters [67] or defining a multi-fidelity framework [180]. With respect to WC, different techniques have been applied. Workflow recommendation has been addressed by means of collaborative filtering and auto-experiment [207] and a learning-to-rank method based on association rules [428], whereas conditional parallel coordinates have proven to make the process more interpretable [422]. In the case of ad hoc tasks, the automatic generation of features has been addressed by a large number of techniques like evolutionary algorithms [119, 390], meta-learning [203, 293] and reinforcement learning [206], among others. However, these tasks are commonly performed by techniques falling into the category “Others”, such as link traversal and SPARQL queries for creating tabular datasets [268], or a custom compiler that transforms the predictive model markup language (PMML) definition of ML models into source code [72].

8 Open gaps, challenges and trends

Our findings make it clear that AutoML has drawn significant research attention in recent years. In response to RQ4, we identify a number of open gaps and challenges, and speculate on related future trends.

8.1 Phases not covered

Section 6.1 reveals that AutoML has not yet covered the automation of the whole knowledge discovery process, which affects the practicality of the proposals in a realistic context. In fact, proposals have been unevenly distributed among the phases that require automation. This review has shown that 93% of the primary studies are focused on the data mining phase. Moreover, most of them are committed to supervised methods, especially classification. This is seemingly motivated by the fact that there is an already labelled dataset and, consequently, it may be more practicable to measure and validate the performance of the automatically generated classifier. In contrast, unsupervised learning has been barely studied and problems like pattern mining are completely unexplored. Preprocessing is covered by 14% of studies, most of which are related to data cleaning and preprocessing, and data reduction and projection. Special mention should be made of those activities of the pre- and postprocessing phases that are inherently human and require intuition and know-how. For example, supporting the comprehensibility of the application domain or creating the target dataset remains a challenge for AutoML. Similarly, explaining and exploiting the acquired knowledge is still an area which requires further study, despite early efforts to explain the generated models in natural language [248] or generate their source code [72]. We speculate that in the near future there will be progress in involving humans in the automation of the different phases of the knowledge extraction process, e.g. with interactive algorithms.

8.2 Lack of interoperability

As noted in Sect. 6.2, most AutoML proposals are committed to the automation of a single phase of the knowledge discovery process. This is particularly common among studies addressing the AS and the HPO of the data mining phase. Similarly, WC proposals generally automate only the preprocessing and data mining phases, leaving aside the support for postprocessing and even some preprocessing steps like domain understanding and dataset creation. As noted above, those proposals that have addressed the automation of these phases have done so in a siloed fashion. At this point, the development of AutoML systems providing comprehensive support for the knowledge discovery process is computationally challenging. However, this holistic view seems necessary if we intend to provide real support for the work of the data scientist, and even support the democratisation of simple data science tasks. Therefore, we foresee that future proposals should consider possible interoperability between different AutoML approaches, thus enabling their reusability and replicability, improving their applicability and greatly facilitating the development and adaptation to the problem of complex workflows.

8.3 Role of the human